This past weekend I ran into a server that was short on disk space because of log-full errors. As usual, I identified the log file that needed to be shrunk and attempted to shrink it. However, to my surprise it didn’t want to shrink and threw the following error.

Msg 3023, Level 16, State 2, Line 1

Backup, file manipulation operations (such as ALTER DATABASE ADD FILE) and encryption changes on a database must be serialized. Reissue the statement after the current backup or file manipulation operation is completed.

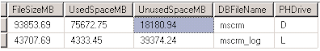

Following is the screenshot of the unused space in the database files.
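The same figures can be pulled with a query instead of the GUI. This is a minimal sketch rather than the exact query I used; it relies only on the `sys.database_files` catalog view and the `FILEPROPERTY` function, and it has to be run in the context of the database being inspected.

```sql
-- Run in the database whose files you want to inspect.
-- size and SpaceUsed are reported in 8 KB pages, so dividing by 128 gives MB.
SELECT
    name                                              AS logical_file_name,
    type_desc                                         AS file_type,
    size / 128.0                                      AS size_mb,
    FILEPROPERTY(name, 'SpaceUsed') / 128.0           AS used_mb,
    (size - FILEPROPERTY(name, 'SpaceUsed')) / 128.0  AS unused_mb
FROM sys.database_files;
```

A large `unused_mb` on the log file is what makes it a candidate for shrinking in the first place.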

For a moment I thought something was wrong with the database. But I later realised that the shrink was conflicting with another operation that was already using the log file.

This could have been caused by an active transaction in the transaction log due to replication, mirroring, log shipping, some data manipulation, or a backup in progress. Within moments it was obvious that the culprit was a backup that was running longer than usual. For a database backup to take that long, I knew the transaction log had to be getting hammered considerably. The database in question had replication configured, and I later found that the staging process was in full flow, which might have caused the backup to take a long time to capture a consistent state of the database.
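A couple of quick checks can confirm this kind of diagnosis. The sketch below is illustrative, not a record of what I ran: `MyDatabase` is a hypothetical database name, and the second query assumes you have permission to view server state.

```sql
-- What is currently preventing log reuse?
-- Typical values include ACTIVE_BACKUP_OR_RESTORE, REPLICATION,
-- ACTIVE_TRANSACTION and LOG_BACKUP.
SELECT name, log_reuse_wait_desc
FROM sys.databases
WHERE name = N'MyDatabase';  -- hypothetical database name

-- Is a backup running right now, and how far along is it?
SELECT
    r.session_id,
    r.command,                                   -- e.g. BACKUP DATABASE, BACKUP LOG
    DB_NAME(r.database_id)                       AS database_name,
    r.start_time,
    r.percent_complete,
    r.estimated_completion_time / 60000.0        AS est_minutes_remaining  -- source column is in ms
FROM sys.dm_exec_requests AS r
WHERE r.command LIKE N'BACKUP%';
```

If the second query returns a row for the database, a shrink attempted at the same time is likely to hit Msg 3023.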

As soon as the backup ended, the shrink operation succeeded.
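If you would rather not keep retrying by hand, one option is to wait for the backup to clear and then shrink. This is only a sketch under stated assumptions: `MyDatabase`, the logical file name `MyDatabase_log`, the 1024 MB target, and the 30-second polling interval are all hypothetical values chosen for illustration.

```sql
USE MyDatabase;  -- hypothetical database name

-- Poll until no backup is running against the current database.
WHILE EXISTS (
    SELECT 1
    FROM sys.dm_exec_requests
    WHERE command LIKE N'BACKUP%'
      AND database_id = DB_ID()
)
BEGIN
    WAITFOR DELAY '00:00:30';  -- assumed polling interval
END;

-- Shrink the log file to a target size given in MB.
DBCC SHRINKFILE (N'MyDatabase_log', 1024);  -- hypothetical logical file name and target size
```

A backup could still start between the check and the shrink, so the error remains possible; in that case simply reissue the statement.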
